How We Found and Fixed a Recursive Deadlock in OpenTelemetry's Python SDK

In this blog

TL;DR

While integrating OpenTelemetry's logging pipeline into our production environment, we discovered a recursive deadlock in the OTel Python SDK that silently freezes all worker threads. We traced it using py-spy thread dumps, identified the exact line of code causing the recursion, and contributed a fix upstream. The PR was merged with 3 approvals from OTel maintainers and the fix will ship in the next release of opentelemetry-instrumentation-logging.

The Setup

We were rolling out OpenTelemetry for centralized log collection and trace correlation across our platform. The integration involved attaching OTel's LoggingHandler to our application's logging pipeline:

# Attached OTel log handler to application logger

logging.getLogger("clickpost").addHandler(otel_logging_handler)

# Enabled OTel logging format

LoggingInstrumentor().instrument(set_logging_format=True)

This meant every logging.info(), logging.debug(), or logging.warning() call would now pass through OTel's LoggingHandler before being emitted.

The change looked completely harmless. It passed code review. It worked in local testing. But under production load with real data, it triggered a latent bug in the OTel SDK.

The Symptoms

After deploying the change, we noticed something strange in our production environment. Gunicorn worker threads were becoming unresponsive. They were neither crashing, nor throwing errors, but silently freezing. Requests would time out, and the frozen state would persist until workers were manually restarted.

At first, we suspected the usual culprits:

-

Slow database queries - nothing unusual in our monitoring

-

Resource exhaustion - CPU and memory looked normal

-

Thread pool saturation - but the threads weren't busy, they were stuck

Nothing in our standard monitoring explained why every single thread was frozen simultaneously. A slow query would affect one thread, not all 20 at once.

That's when we reached for py-spy.

The Breakthrough - py-spy Thread Dump

py-spy is a sampling profiler that can attach to a running Python process and dump the call stack of every thread, without modifying the application, without any overhead.

py-spy dump --pid $(pgrep -f gunicorn)

What we saw immediately explained everything.

Across all 4 Gunicorn workers (each running 5 threads), every single request-handling thread was stuck at the exact same line:

Thread 340 (active): "ThreadPoolExecutor-2_0"

acquire (logging/__init__.py:927) ← STUCK (waiting for lock)

handle (logging/__init__.py:976)

callHandlers (logging/__init__.py:1706)

handle (logging/__init__.py:1644)

_log (logging/__init__.py:1634)

warning (logging/__init__.py:1501) ← OTel's internal warning

_clean_extended_attribute (attributes/__init__.py:209) ← OTel finds invalid attribute

__setitem__ (attributes/__init__.py:282)

__init__ (attributes/__init__.py:264)

__init__ (_logs/_internal/__init__.py:265)

_translate (_logs/_internal/__init__.py:637)

emit (_logs/_internal/__init__.py:656) ← OTel LoggingHandler

handle (logging/__init__.py:978) ← Lock FIRST acquired here

callHandlers (logging/__init__.py:1706)

...

do_log (utility/logging_utils.py:107) ← Our application code

20 threads. All frozen. All at acquire (logging/__init__.py:927). Waiting to acquire a logging handler lock that would never be released.

This wasn't a slow query. This was a deadlock.

The Deadlock - How It Works

The OpenTelemetry Python SDK has a known recursive logging deadlock bug (#3858, #3560, #4585).

Here's exactly what happens inside a single request thread:

Step 1: Our application code calls logging.info("fetching counter data")

Step 2: Python's logging framework picks up this log, walks the handler chain, and when it reaches OTel's LoggingHandler, it acquires the handler lock before calling emit()

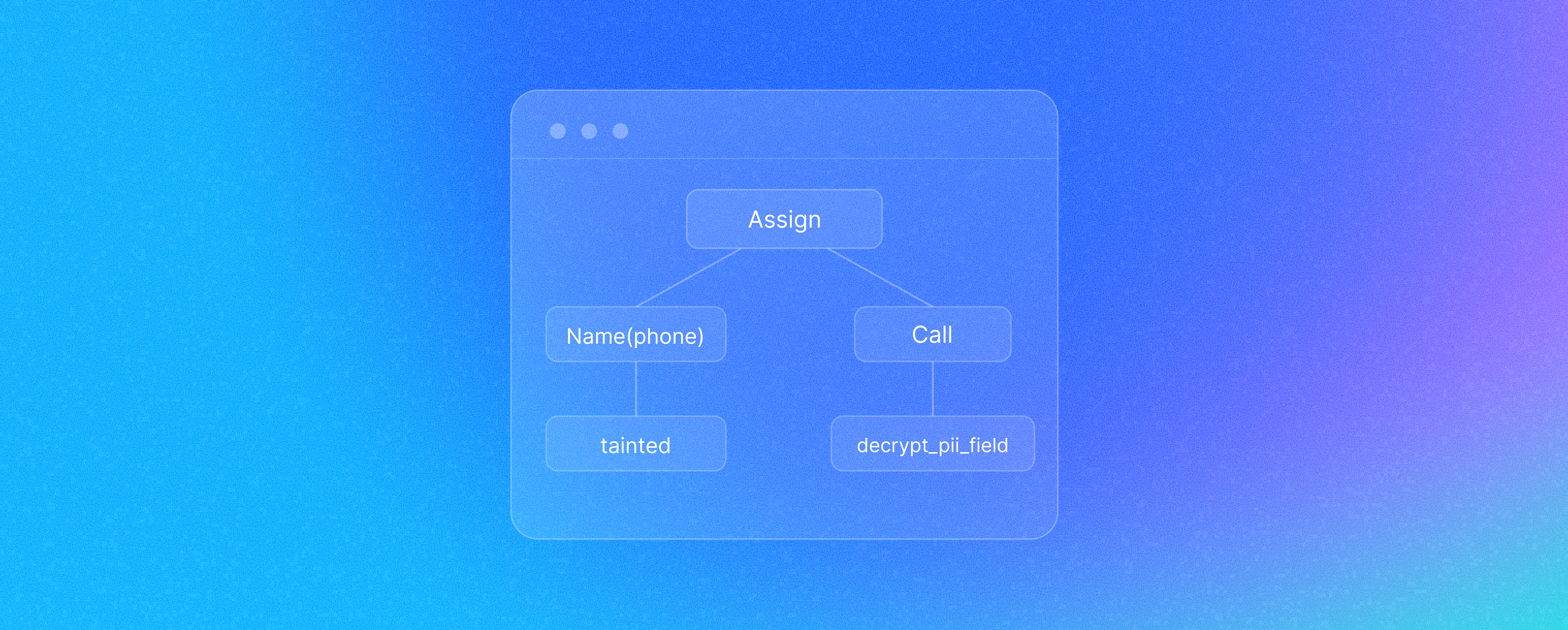

Step 3: OTel's emit() calls _translate() to convert the Python log record into OTel format. During translation, it creates BoundedAttributes and calls _clean_extended_attribute() to validate each attribute

Step 4 — THE BUG: Here's the exact function from the OTel SDK (opentelemetry/attributes/__init__.py):

def _clean_extended_attribute(

key: str, value: types.AnyValue, max_len: Optional[int]

) -> types.AnyValue:

if not (key and isinstance(key, str)):

_logger.warning("invalid key `%s`. must be non-empty string.", key) # line 203

return None

try:

return _clean_extended_attribute_value(value, max_len=max_len) # line 207

except TypeError as exception:

_logger.warning("Attribute %s: %s", key, exception) # line 209 ← THIS LINE

return None

Our py-spy stack trace pointed directly at line 209, not line 203. This told us the key was valid but the value was the problem. _clean_extended_attribute_value() handles None, primitives (str, int, float, bool), dicts, and lists safely. But for any other type — a datetime object, a set, a custom class instance like Django's WSGIRequest — it raises a TypeError. Django, Sentry, and Celery inject these non-standard types as log record attributes. The except block on line 208 catches the TypeError, and line 209 logs a warning about it — which is what triggers the recursive re-entry.

Step 5: That _logger.warning() on line 209 is itself a log call. _logger is a module-level logger that propagates to the root logger, which has our OTel LoggingHandler attached. So the warning re-enters the Python logging pipeline, walks the handler chain again, and tries to acquire the same handler lock

Step 6: But that lock is already held by Step 2 — by the same thread. The thread is now waiting for itself

Step 7: DEADLOCK. The lock is never released. This thread is frozen forever

The Cascade

Once one thread deadlocks, the logging handler lock is permanently held. Every other thread that tries to log; request threads, health check threads, background heartbeat threads; they all queue up behind the same lock and block indefinitely.

With a Gunicorn setup of 4 workers × 5 threads = 20 request threads, all 20 freeze. Zero requests can be served. The system is completely stuck until workers are restarted and even then, the deadlock recurs as soon as a log record with a non-primitive attribute is processed.

The Fix - Contributing to OpenTelemetry

After reverting the configuration to stabilize our environment, we turned to the real problem: this was a bug in the OTel SDK itself. Multiple GitHub issues documented it (#3858, #3560, #4585), but no fix had been merged into the logging handler.

We opened PR #4302 on the opentelemetry-python-contrib repository.

The PR Journey - 4 Rounds of Review

What started as a straightforward fix evolved through 4 rounds of reviewer feedback from OTel maintainers and contributors. Each round caught something we'd missed.

Round 1: threading.local()

Our initial fix used a threading.local() guard to detect re-entrant calls:

class LoggingHandler(logging.Handler):

_guard = threading.local()

def emit(self, record):

if getattr(self._guard, 'emitting', False):

return

self._guard.emitting = True

try:

# process the log

finally:

self._guard.emitting = False

Reviewer feedback (@DylanRussell): threading.local() doesn't handle async properly. In async frameworks like FastAPI, multiple coroutines share a single thread. threading.local() would let one coroutine's guard block another coroutine on the same thread.

Round 2: ContextVar + safe logger

Switched to ContextVar which isolates per async task, not just per thread. Also added a _propagate_false_logger — a dedicated logger with propagate=False for the recursion warning. Without this, the warning itself would propagate to the root logger, potentially hitting the OTel handler again.

Round 3: Naming

Reviewer feedback (@pmcollins): Renamed _emit_context to _is_emitting for clarity.

Round 4: The lastResort edge case

Reviewer feedback (@herin049): If a user overrides logging.lastResort to point to the OTel handler, our warning logger (with no handlers of its own) would fall back to lastResort and deadlock again. The fix: rename to _internal_logger and add an explicit StreamHandler, so it never consults lastResort.

The Final Fix

from contextvars import ContextVar

_internal_logger = logging.getLogger(__name__ + ".internal")

_internal_logger.propagate = False

_internal_logger.addHandler(logging.StreamHandler())

class LoggingHandler(logging.Handler):

_is_emitting: ContextVar[bool] = ContextVar("_is_emitting", default=False)

def emit(self, record):

if self._is_emitting.get():

_internal_logger.warning(

"LoggingHandler.emit detected recursive logging, "

"skipping to prevent deadlock."

)

return

token = self._is_emitting.set(True)

try:

logger = get_logger(record.name, logger_provider=self._logger_provider)

if not isinstance(logger, NoOpLogger):

logger.emit(self._translate(record))

finally:

self._is_emitting.reset(token)

How it works: Before processing a log record, check if we're already inside emit() on this execution context. If yes, skip the recursive call and log a warning to a safe internal logger (with propagate=False and its own StreamHandler, so it can never reach the OTel handler). The finally block ensures the guard always resets.

|

Round |

Feedback |

Change |

|

1 |

threading.local() doesn't handle async |

Switched to ContextVar |

|

2 |

Need a safe logger for recursion warning |

Added _propagate_false_logger with propagate=False |

|

3 |

Better naming |

Renamed _emit_context → _is_emitting |

|

4 |

User could override logging.lastResort |

Added explicit StreamHandler to _internal_logger |

The Merge

The PR received 3 approvals from reviewers with write access, passed 784 CI checks across Python 3.9 through 3.14, and was merged into the main branch.

Status: ✅ Merged

The fix will ship in the next release of opentelemetry-instrumentation-logging and will protect every Python application using OTel's logging integration from this deadlock.

Lessons Learned

py-spy is invaluable for production debugging

Without the thread dump, we would have kept chasing the wrong hypothesis. The dump made the problem immediately visible. Every thread frozen at the same lock, the recursive call chain clear as day.

# This single command told us everything

py-spy dump --pid $(pgrep -f gunicorn)

Keep py-spy in your production toolkit. When threads are stuck, it shows you exactly where.

Don't assume. Look at the threads

We initially suspected slow queries or resource exhaustion. The real problem was a deadlock, something no amount of scaling would fix. When your system freezes simultaneously across all threads, it's almost never a slow endpoint. It's a shared resource (lock, connection pool, file descriptor) that's poisoned.

Logging is not free

We tend to think of logging.info() as a no-op, but it acquires locks, traverses handler chains, and can trigger arbitrary code in handlers. In a threaded server, the logging lock becomes a global chokepoint. Any handler that does non-trivial work inside emit() is a potential bottleneck — or in this case, a deadlock.

Review feedback makes code better

Our initial fix used threading.local() and would have broken in async contexts. It also missed the logging.lastResort edge case. Four rounds of review from experienced maintainers turned a working fix into a robust one that handles edge cases we hadn't considered.

If you fix a bug in a library, contribute it back

We hit a known bug, understood it deeply through debugging, and wrote a fix with good tests. The production evidence; py-spy dumps, exact reproduction steps, thread analysis; made our PR credible. The open source community benefits, and so does every team that would have hit the same issue.

References

-

OTel Issue #3858: HTTP Worker Thread Blocked (Deadlock)

-

OTel Issue #3560: Recursive Logging Bug

-

OTel Issue #4585: Infinite Recursion in Log Shutdown

We discovered this bug while rolling out OTel logging in production, traced it with a single py-spy command, and contributed a recursion guard that will prevent the same deadlock for every Python application using OpenTelemetry's logging integration.

If you're using OTel's LoggingHandler with Python, upgrade to the latest opentelemetry-instrumentation-logging once this fix is released.

.png?width=879&height=549&name=Page%2074%20(1).png)